We are beginning to live alongside systems that can answer almost anything.

Ask a question.

Receive a recommendation.

Generate a summary.

Approve a decision. Move on.

But there is a quiet distinction emerging, one that matters more than capability.

Some systems give answers while helping us understand how those answers came to be.

Others provide results that arrive fully formed, efficient, and authoritative, yet strangely unreadable.

This is the difference between AI that explains and AI that obscures.

The ethical question is not whether artificial intelligence can produce outcomes.

It is whether the people affected by those outcomes are allowed to see, question, and interpret the reasoning behind them.

Transparency, in this sense, is not a technical feature. It is an expression of care.

The Seduction of Seamlessness ✨

Modern technology design prizes frictionlessness.

The ideal system is fast, invisible, and effortless.

Complexity is hidden so thoroughly that users never have to confront it.

This aesthetic of seamlessness is often celebrated as progress.

Yet it carries a cost.

When complexity disappears from view, so does accountability.

Decisions appear inevitable rather than constructed.

Interfaces smooth over uncertainty, presenting outputs as if they were neutral facts rather than probabilistic judgments shaped by data, assumptions, and constraints.

What feels like elegance can function as concealment.

Ethical design sometimes requires the opposite impulse: to slow interactions down just enough for understanding to occur.

What Explainability Really Means 🔍

Explainability is often framed narrowly, as if it means exposing algorithms or publishing technical documentation.

But most people do not need to read code to understand how a system affects their lives.

True explainability is about legibility.

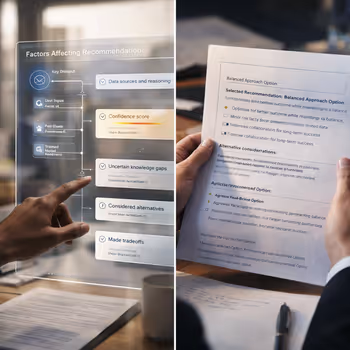

A legible system communicates:

- What influenced this result

- How confident it is

- Where its knowledge ends

- What tradeoffs were made

- What alternatives might exist

This is not transparency for engineers.

It is transparency for humans living with consequences.

Without legibility, AI subtly shifts from being a tool we use to an authority we defer to.

When AI Obscures ⚠️

Opaque systems change behavior in ways that are easy to miss.

When reasoning is hidden:

- People stop asking questions.

- Outputs gain unearned authority.

- Responsibility diffuses, and no one feels fully accountable.

- Errors become harder to detect because the path that produced them is invisible.

This dynamic is already familiar in other domains.

Systems that cannot be interrogated tend to accumulate power quietly.

They reshape decisions without inviting participation.

Obscurity does not merely limit understanding.

It redistributes agency.

Transparency as a Form of Care 🫶

Care, in human relationships, is expressed through clarity.

We explain ourselves not because we must, but because we recognize others are affected by what we do.

The same principle applies to technological systems.

Designing AI that explains itself signals:

- Respect for the user’s judgment

- Willingness to share responsibility

- Recognition that uncertainty is part of knowledge

- Permission to question rather than comply

Transparency becomes relational.

It acknowledges that intelligence, artificial or human, operates within shared environments of trust.

When explanation disappears, so does that relationship.

Designing AI That Explains 🛠️

Creating legible systems is not primarily a technical challenge.

It is a design choice shaped by values.

Ethical AI requires commitments such as:

Visible Reasoning Paths 🧭

Show how outputs are formed in accessible language: what factors mattered, what patterns were detected, and how much weight each one carried.

Declared Uncertainty 📊

Communicate confidence levels and limitations instead of presenting conclusions as absolute.

Reversible Authority 🔁

Allow humans to question, adjust, or override system outputs.

Authority should remain collaborative, not final.

Contextual Grounding 🌍

Explain the data sources, assumptions, and boundaries that shaped the response.

These are not interface embellishments.

They are governance decisions embedded in design.
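To make these four commitments concrete, here is a minimal sketch of what a legible output might look like as a data structure. It is an illustration under stated assumptions, not a reference implementation: the names `Factor`, `ExplainedDecision`, `override`, and `explain` are hypothetical, as are the 0 to 1 scales for weight and confidence and every value in the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Factor:
    """One input that influenced the result, described in plain language."""
    name: str
    weight: float  # hypothetical relative influence on a 0..1 scale
    reason: str


@dataclass
class ExplainedDecision:
    """A system output bundled with the context a person needs to question it."""
    outcome: str                    # what the system suggested
    confidence: float               # declared uncertainty, 0..1 (assumed scale)
    factors: list[Factor]           # visible reasoning path
    data_sources: list[str]         # contextual grounding
    limitations: list[str]          # where the system's knowledge ends
    human_outcome: Optional[str] = None   # reversible authority
    overridden_by: Optional[str] = None
    override_reason: Optional[str] = None

    @property
    def final_outcome(self) -> str:
        """The human decision if one was recorded, else the system's suggestion."""
        return self.human_outcome if self.human_outcome is not None else self.outcome

    def override(self, reviewer: str, new_outcome: str, reason: str) -> None:
        """Record a human override without erasing what the system suggested."""
        self.human_outcome = new_outcome
        self.overridden_by = reviewer
        self.override_reason = reason

    def explain(self) -> str:
        """Render the decision as a short plain-language explanation."""
        lines = [f"System suggested: {self.outcome} (confidence: {self.confidence:.0%})"]
        lines.append("What influenced this result:")
        # List the most influential factors first.
        for factor in sorted(self.factors, key=lambda f: f.weight, reverse=True):
            lines.append(f"  - {factor.name}: {factor.reason}")
        lines.append("Based on: " + ", ".join(self.data_sources))
        lines.append("Limits: " + "; ".join(self.limitations))
        if self.human_outcome is not None:
            lines.append(
                f"Final decision: {self.human_outcome} "
                f"(set by {self.overridden_by}: {self.override_reason})"
            )
        return "\n".join(lines)


# Hypothetical example values, for illustration only.
decision = ExplainedDecision(
    outcome="refer for manual review",
    confidence=0.72,
    factors=[
        Factor("payment record", 0.4, "no missed payments on file"),
        Factor("income history", 0.6, "only 4 months of records available"),
    ],
    data_sources=["application form", "payment records"],
    limitations=["no data older than 12 months"],
)
print(decision.explain())
```

The design choice worth noticing is that an override never replaces the system's suggestion. Both stay on record, along with who changed the outcome and why, so authority remains shared and the path to the final decision stays readable.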

Why Obscuring Systems Scale Faster 🚀

There is a reason opaque systems proliferate.

They are easier to build, easier to productize, and easier to protect commercially.

They reduce friction, accelerate adoption, and minimize questions that might complicate deployment.

But what they gain in speed, they risk losing in trust. Systems that obscure their reasoning externalize uncertainty onto users while retaining control internally.

Efficiency without intelligibility can scale harm as quickly as it scales convenience.

AI Literacy as Shared Responsibility 📚

Transparency alone is insufficient if people are not equipped to engage with it.

Ethical AI requires a partnership:

- Designers must make systems understandable.

- Organizations must cultivate cultures where questioning is encouraged.

- Users must be invited into interpretation rather than positioned as passive recipients.

AI literacy is not about mastering technology.

It is about maintaining human agency within technological environments.

Conclusion: The Systems We Can Live With 🕯️

The future of AI will not be determined solely by how powerful these systems become.

It will be shaped by how readable they remain.

We must decide whether intelligence technologies will replace human judgment or support it, whether they will close conversations or deepen them.

The most ethical AI will not be the one that speaks the fastest or predicts the most.

It will be the one that continues to explain itself, even when it does not have to.

Because systems we cannot question are systems we cannot truly trust.
